Agentic Design Patterns, Part 1: What Makes an AI System Actually Agentic

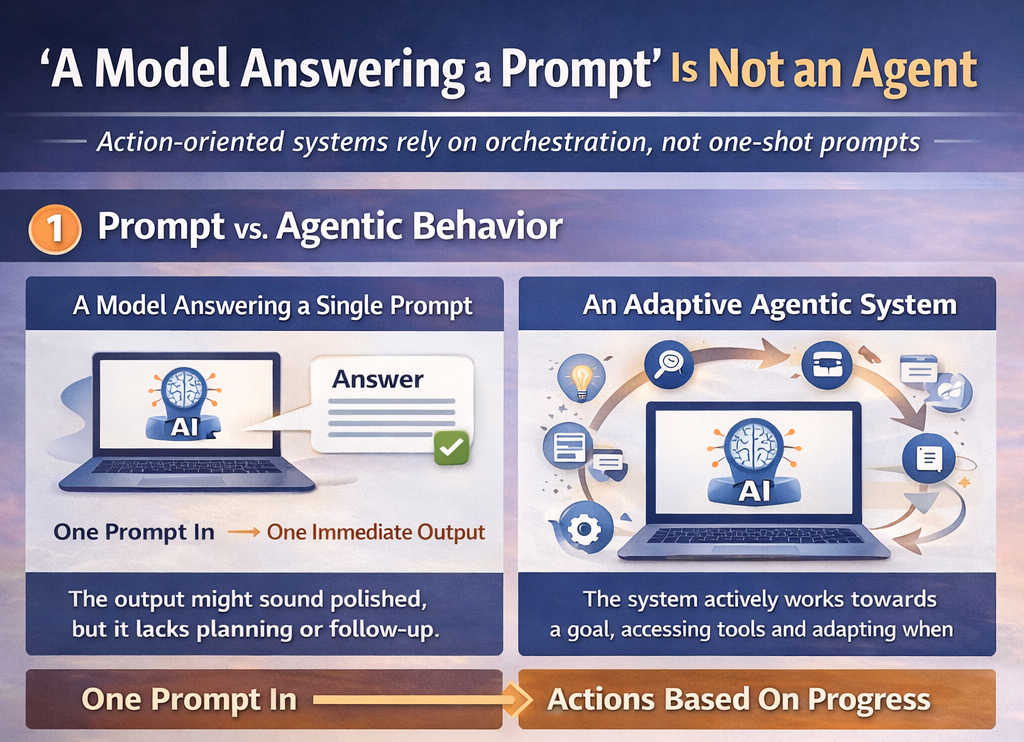

A model answering a prompt is not an agent. The useful distinction is action-oriented orchestration: goals, tools, state, adaptation, and structured control around the model.

Treating a model that answers a prompt as an agent is the confusion behind a lot of weak AI products. Teams expect one-shot prompting to behave like planning, tool use, recovery, and decision-making, then act surprised when the output sounds polished but falls apart operationally.

The more useful definition of an agentic system is simple: it is a model wrapped in enough structure to pursue a goal, choose actions, access tools or information, track state, and adapt when the environment pushes back. In other words, agentic behavior comes from orchestration, not just from a stronger model.

- Agentic systems are action-oriented architectures, not just clever prompts.

- The key shift is from isolated output generation to goal-directed execution under uncertainty.

- Most real agent failures are systems-design failures long before they are model-intelligence failures.

Why the distinction matters

A one-shot prompt can sound competent while still failing at planning, verification, decomposition, or recovery. That is why treating every AI task like a single-prompt problem creates brittle products. If the model has to reason about goals, choose from several possible actions, track intermediate progress, call tools, and recover from failure, then you are already doing systems design whether you admit it or not.

The book summary makes this point well: model capability alone is not enough. Reliable agents come from reusable patterns that define how prompts, tools, memory, evaluation, and human oversight fit together. That is a much healthier framing than the usual hype cycle around autonomous AI.

What makes a system agentic

- It interprets a goal instead of only responding to a single instruction

- It can choose among actions rather than only produce text

- It accesses tools, APIs, code execution, or external information when needed

- It keeps enough intermediate state to continue coherently across steps

- It adapts based on outcomes instead of replaying the same logic forever

Agentic means the model is embedded in a process that can act, observe, update, and continue toward a goal under constraints.
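
The act, observe, update, continue cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `choose_action` stands in for a real model call, and `search_inventory`, `ToolError`, and `AgentState` are hypothetical names invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


class ToolError(Exception):
    """Raised by a tool when it cannot complete the request."""


def search_inventory(item: str) -> str:
    # Hypothetical tool: a real one would call an API or a database.
    stock = {"widget": 3}
    if item not in stock:
        raise ToolError(f"unknown item: {item}")
    return f"{item}: {stock[item]} in stock"


# Hypothetical tool registry, keyed by the name the model would emit.
TOOLS: Dict[str, Callable[[str], str]] = {"search_inventory": search_inventory}


@dataclass
class AgentState:
    goal: str
    observations: List[str] = field(default_factory=list)
    failures: int = 0
    done: bool = False


def choose_action(state: AgentState) -> Tuple[str, str]:
    # Stand-in for the model call. It reads state, so a failed first
    # attempt changes what it tries next.
    if state.failures == 0:
        return ("search_inventory", "gadget")
    return ("search_inventory", "widget")


def run_agent(goal: str, max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):  # hard step budget: the loop cannot run forever
        tool_name, argument = choose_action(state)   # choose
        try:
            result = TOOLS[tool_name](argument)      # act
        except ToolError as exc:
            state.failures += 1                      # observe the failure
            state.observations.append(f"error: {exc}")
            continue                                 # adapt on the next pass
        state.observations.append(result)            # observe the success
        state.done = True
        break
    return state
```

Calling `run_agent("check widget stock")` fails once on the unknown item, records the error in state, and succeeds on the second pass. The point of the sketch is that the recovery comes from the loop and the state, not from the stubbed model.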

Agents live between rigid automation and full autonomy

One of the strongest ideas in the summary is that agentic systems sit between traditional fixed workflows and unconstrained autonomous actors. That is exactly right. Good agent design is deciding where flexibility is valuable, where structure is mandatory, and where people need to stay in the loop. You are not trying to make the system maximally free. You are trying to make it useful and controllable at the same time.
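
One way to make that trade-off explicit is a small policy table that says which actions the agent may take alone, which need a person to confirm, and which are never allowed. The sketch below is an assumption about how such a gate could look; the action names, the `POLICY` table, and the `gate` function are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Control(Enum):
    AUTO = "auto"                      # flexibility is fine: agent acts alone
    NEEDS_APPROVAL = "needs_approval"  # a person stays in the loop
    FORBIDDEN = "forbidden"            # structure is mandatory: never allowed


# Hypothetical policy table; a real system would load this from configuration.
POLICY = {
    "read_ticket": Control.AUTO,
    "issue_refund": Control.NEEDS_APPROVAL,
    "delete_account": Control.FORBIDDEN,
}


@dataclass
class ProposedAction:
    name: str
    argument: str


def gate(action: ProposedAction, approve: Callable[[ProposedAction], bool]) -> bool:
    """Return True if the proposed action may run."""
    # Actions missing from the table are denied by default.
    control = POLICY.get(action.name, Control.FORBIDDEN)
    if control is Control.AUTO:
        return True
    if control is Control.NEEDS_APPROVAL:
        return approve(action)  # defer the decision to a human callback
    return False
```

Here `approve` is whatever callback asks a person, for example a review queue or a chat prompt. Denying unlisted actions by default is a design choice in this sketch: it keeps new tools controllable until someone decides how much freedom they should get.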

That perspective also explains why so many production agents feel underwhelming. Teams often optimize for impressive-looking autonomy without giving the system the architecture required to manage ambiguity, tool latency, exception handling, or partial failure. The result is not an intelligent agent. It is a brittle demo with nicer copy.
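
As a small example of the missing architecture, the sketch below wraps a tool call in bounded retries with exponential backoff and returns a structured result instead of letting an exception escape. It is an illustration only: `ToolResult` and `call_with_retry` are hypothetical names, and timeouts for slow tools are left out to keep it short.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolResult:
    ok: bool
    value: Optional[str] = None
    error: Optional[str] = None
    attempts: int = 0


def call_with_retry(
    tool: Callable[[str], str],
    argument: str,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> ToolResult:
    """Call a tool, retrying on any exception up to max_attempts times."""
    last_error: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        try:
            return ToolResult(ok=True, value=tool(argument), attempts=attempt)
        except Exception as exc:
            last_error = f"{type(exc).__name__}: {exc}"
            if attempt < max_attempts:
                # Exponential backoff: base_delay, then 2x, then 4x, ...
                sleep(base_delay * 2 ** (attempt - 1))
    # Every attempt failed: report the failure as data so the caller
    # can decide whether to re-plan, fall back, or escalate.
    return ToolResult(ok=False, error=last_error, attempts=max_attempts)
```

Returning a `ToolResult` rather than raising lets the surrounding control loop treat a failed tool as one more observation. The `sleep` parameter is injectable only so the backoff can be exercised without real waiting.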

The real design problem

The useful way to think about agentic AI is not 'How do I get the model to do more?' It is 'How do I surround the model with enough structure that it can do the right amount of work reliably?' Once the question changes, the architecture changes too. That is where patterns start to matter.

The core mistake in agentic AI is treating model output like system behavior. It is not. Behavior comes from orchestration.

If you want reliable agents, start by designing goals, state, tools, and control loops. The model is only one part of that system.
