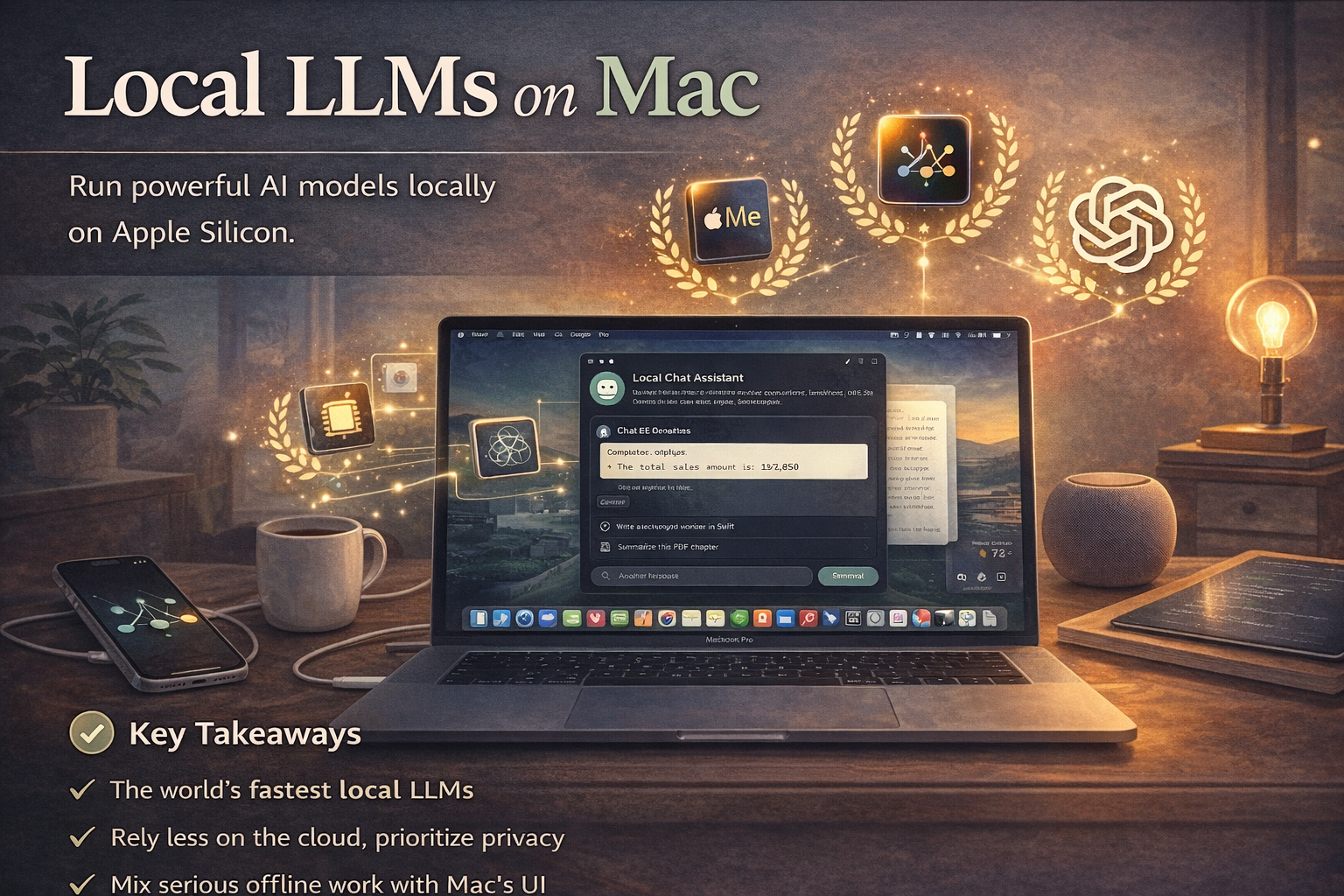

Running Local LLMs on Apple Silicon Macs Is Now a Serious Workflow

Apple Silicon's unified memory and mature Metal tooling are turning local model inference from curiosity into a practical setup for many developers.

Running local models on Apple Silicon is no longer just a hobbyist experiment. For many developers, it is becoming a legitimate workflow option because Apple's unified memory architecture removes one of the main bottlenecks that constrain local inference on typical consumer hardware: the fixed VRAM ceiling of a discrete GPU.

That shift matters for privacy-conscious teams, offline use cases, and developers who want faster iteration on local AI features without routing every experiment through a hosted API.

- Apple Silicon's unified memory architecture makes larger local-model workflows more feasible than many people assume.

- Metal acceleration has matured enough across Ollama, llama.cpp, and MLX to make the Mac a real local-inference platform.

- Treating local LLM setup as a serious workflow means thinking about hardware tiers, memory limits, and installer hygiene, not just model novelty.

Why Apple Silicon changed the equation

A practical optimization guide makes the case that M1, M2, and M3 Macs have turned local inference into something many developers can use productively every day. The core reason is unified memory. Instead of being constrained by the fixed VRAM limit of a discrete GPU and the overhead of moving model weights across a PCIe bus, Apple Silicon lets the CPU, GPU, and Neural Engine share one high-bandwidth memory pool.

That does not make every Mac a frontier-model machine, but it does make local model work more viable than many developers expect, especially for quantized models and well-chosen hardware tiers.
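To make the link between quantization and hardware tier more concrete, here is a rough back-of-the-envelope sketch of how weight size scales with parameter count and bits per weight. The function names, the 70% usable-memory margin, and the example model sizes are illustrative assumptions, not figures from the guide. The estimate covers weights only; it ignores the KV cache, runtime overhead, and memory used by macOS and other apps, and real quantization formats often use slightly more than their nominal bit width.

```python
GIB = 1024 ** 3


def estimate_weights_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GiB."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / GIB


def fits_in_unified_memory(
    weights_gib: float,
    total_memory_gib: float,
    usable_fraction: float = 0.7,  # hypothetical safety margin, not a macOS constant
) -> bool:
    """Check the weights against an assumed usable share of unified memory."""
    return weights_gib <= total_memory_gib * usable_fraction


# Example combinations chosen for illustration only.
for params, bits in [(7, 4), (7, 16), (70, 4)]:
    size = estimate_weights_gib(params, bits)
    verdict = fits_in_unified_memory(size, total_memory_gib=16)
    print(f"{params}B at {bits}-bit: ~{size:.1f} GiB, fits on a 16 GiB Mac: {verdict}")
```

Under these assumptions, a 7B model at 4-bit leaves comfortable headroom on a 16 GiB machine, while the same model at 16-bit does not, which is why quantization tends to decide which tier a model belongs on.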

The practical setup guidance is the valuable part

The guide is valuable because it stays grounded in actual setup work. It covers modern macOS requirements, Xcode Command Line Tools, disk-space expectations for quantized models, and the practical roles of Ollama, llama.cpp, and MLX. It also explains why Metal acceleration matters and how memory configuration affects the size of models that remain realistically usable.

- Use Ollama for fast setup and convenience

- Use llama.cpp for more granular tuning and runtime control

- Use MLX when Python-native Apple Silicon research workflows matter most
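As an illustration of the "fast setup" end of that list, the sketch below sends one prompt to a locally running Ollama server over its HTTP API. It assumes Ollama is already installed and listening on its default local port, and that the model you name has been pulled beforehand; the helper names are hypothetical and the model name is whatever you choose to pass in.

```python
import json
import urllib.request

# Default address of a local Ollama server; adjust if yours is configured differently.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text (requires a running server)."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

Calling `generate("<your-model>", "Summarize unified memory in one sentence.")` would return the model's reply as a string. llama.cpp and MLX expose more tuning knobs, at the cost of more setup than this.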

Security hygiene matters more as local use matures

One of the better details in the guide is its warning about installer hygiene. It recommends verifying the Ollama installer instead of piping a remote script directly into `sh`. That is the kind of operational discipline many local-LLM tutorials skip, but it becomes more important as local model usage moves from curiosity into real developer tooling.
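One way to apply that advice is to download the installer to disk first and compare its SHA-256 digest against a value obtained through a channel you trust. The sketch below is a generic check, not the guide's exact procedure: the helper names are hypothetical, and it assumes a trusted digest is available to compare against.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large installers are not loaded into memory at once."""
    digest = hashlib.sha256()
    with Path(path).open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_digest(path: str, expected_hex: str) -> bool:
    """Return True only if the file's digest equals the trusted one."""
    actual = sha256_of_file(path)
    # Normalize whitespace and case before a constant-time comparison.
    return hmac.compare_digest(actual, expected_hex.strip().lower())
```

The idea is to run the installer only when `matches_published_digest(downloaded_path, trusted_digest)` returns `True`, where both arguments are values you supply. A digest copied from the same unauthenticated source as the download adds little protection.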

Read the Apple Silicon local LLM optimization guide →

Local inference on Apple Silicon is becoming a serious workflow because the hardware, runtimes, and practical guidance have all improved enough to support real use.

The right way to think about it now is not novelty, but fit: hardware tier, model size, privacy needs, and how much local control actually matters to the job.
