RAG vs Fine-Tuning in 2026: Stop Treating It Like a Binary Choice

The strongest AI systems increasingly stop treating RAG and fine-tuning as rivals and instead assign each technique to the part of the workflow it actually fits.

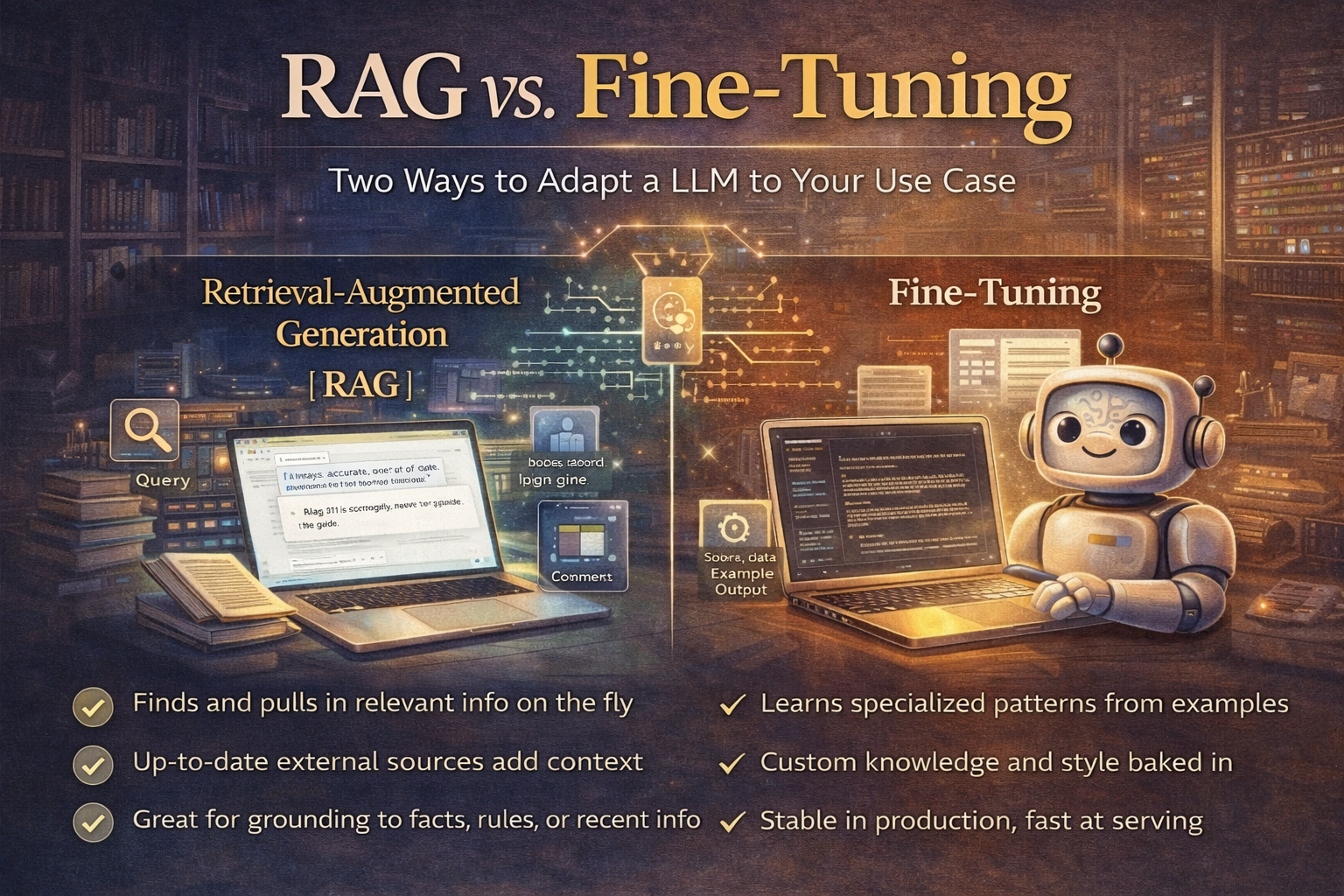

RAG versus fine-tuning is still one of the most common architecture debates in applied AI, but teams often frame the question in a way that leads to bad decisions. The issue is rarely which technique is universally better.

The more useful question is what part of the workflow needs live knowledge and what part needs stable learned behavior. Once framed that way, the tradeoffs become much clearer.

- RAG tends to win when knowledge changes often, citations matter, and auditability is required.

- Fine-tuning still makes sense for rigid, repetitive, latency-sensitive tasks with stable patterns.

- The strongest production systems increasingly combine both instead of treating them as mutually exclusive camps.
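The three rules of thumb above can be written down as a small helper. This is only a sketch: the `WorkflowStep` fields and the `suggest_approach` function are hypothetical names invented for illustration, and a real decision would weigh cost, data availability, and latency budgets that a few booleans cannot capture.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStep:
    """One part of a workflow, described by the traits the article names."""
    name: str
    knowledge_changes_often: bool
    needs_citations: bool
    stable_repetitive_task: bool


def suggest_approach(step: WorkflowStep) -> str:
    """Map a step's traits to a starting point (illustrative heuristic only)."""
    # RAG fits when knowledge is live or answers must be traceable to sources.
    wants_rag = step.knowledge_changes_often or step.needs_citations
    # Fine-tuning fits when the behavior is fixed and repeated at volume.
    wants_fine_tuning = step.stable_repetitive_task

    if wants_rag and wants_fine_tuning:
        return "hybrid"
    if wants_rag:
        return "rag"
    if wants_fine_tuning:
        return "fine-tuning"
    return "undecided"
```

Note that the function is applied per workflow step, not per product: a single assistant can contain one step that suggests `"rag"` and another that suggests `"fine-tuning"`.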

When RAG is the better choice

Retrieval-augmented generation still has a strong advantage when teams need source-backed responses, knowledge that can be updated or deleted without retraining, and access to information that changes frequently. If the assistant must remain aligned to current policy, product documentation, or live enterprise knowledge, RAG is often the more appropriate foundation because it grounds the model's answers in current external context rather than in frozen weights.

- Dynamic knowledge bases and frequently changing information

- Compliance-heavy environments where citations and audit trails matter

- Multi-team assistants built on shared, current internal knowledge
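To make the citation and deletion points concrete, here is a minimal sketch of the retrieval side. Everything in it is an assumption made for illustration: `Document`, `KnowledgeStore`, and `build_prompt` are hypothetical names, and the word-overlap scoring stands in for whatever vector or keyword index a real system would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str


class KnowledgeStore:
    """Toy in-memory store; a stand-in for a real search or vector index."""

    def __init__(self) -> None:
        self._docs: dict[str, Document] = {}

    def upsert(self, doc: Document) -> None:
        # Updating knowledge is a data write, not a training run.
        self._docs[doc.doc_id] = doc

    def delete(self, doc_id: str) -> None:
        # Once removed, the document can no longer be retrieved or cited.
        self._docs.pop(doc_id, None)

    def retrieve(self, query: str, k: int = 2) -> list[Document]:
        # Score by naive word overlap (an assumption, not a recommendation).
        query_terms = set(query.lower().split())
        scored = []
        for doc in self._docs.values():
            overlap = len(query_terms & set(doc.text.lower().split()))
            if overlap > 0:
                scored.append((overlap, doc))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [doc for _, doc in scored[:k]]


def build_prompt(query: str, docs: list[Document]) -> str:
    """Attach source ids so the answer can cite them and be audited later."""
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in docs)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"{context}\n\nQuestion: {query}"
    )
```

The design point is that `upsert` and `delete` change what the assistant can say immediately, and the `doc_id` tags in the prompt give reviewers something to trace an answer back to.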

When fine-tuning still wins

Fine-tuning remains valuable when the task is structurally rigid, high volume, and predictable enough that lower unit cost and faster responses matter more than access to changing context. That includes workloads like classification, extraction, and standardized content generation where behavior needs to stay tight and repeatable.

Use fine-tuning when the job is stable enough that teaching a fixed behavior matters more than fetching fresh knowledge.

The strongest systems are hybrid

In practice, the two techniques divide the work. A hybrid architecture lets RAG fetch current facts, policies, or product context while a fine-tuned model handles tone, formatting, domain-specific response patterns, and behavioral consistency. Once the problem is decomposed that way, the debate stops being ideological and becomes architectural.
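A hybrid pipeline of this kind can be sketched in a few lines. The names here (`hybrid_answer`, `stub_retrieve`, `stub_fine_tuned_model`) are hypothetical, and both stubs are placeholders: in a real system the retriever would query a live index and the generator would call a model that has actually been fine-tuned on the desired tone and format.

```python
from typing import Callable

# Both stages are injected as plain callables so either can be swapped out.
Retriever = Callable[[str], list[str]]
Generator = Callable[[str], str]


def hybrid_answer(query: str, retrieve: Retriever, generate: Generator) -> str:
    """RAG supplies the facts; the (fine-tuned) generator supplies the behavior."""
    passages = retrieve(query)
    context = "\n".join(f"- {p}" for p in passages)
    prompt = f"Context:\n{context}\n\nQuestion: {query}"
    return generate(prompt)


def stub_retrieve(query: str) -> list[str]:
    # Placeholder for a live knowledge lookup.
    return ["Refunds are accepted within 30 days of purchase."]


def stub_fine_tuned_model(prompt: str) -> str:
    # Placeholder for a tuned model: it always answers in one house style
    # and only repeats facts found in the retrieved context lines.
    facts = [line[2:] for line in prompt.splitlines() if line.startswith("- ")]
    return "Thanks for reaching out. " + " ".join(facts)


if __name__ == "__main__":
    print(hybrid_answer("What is the refund window?", stub_retrieve, stub_fine_tuned_model))
```

Keeping the two stages behind separate interfaces mirrors the decomposition described above: the knowledge source can change daily without retraining, and the tuned model can be replaced without touching the index.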

RAG versus fine-tuning is a bad binary but a useful systems-design question.

Teams make better decisions when they assign each method to the part of the workflow it actually fits instead of trying to crown one universal winner.
